The Era of AI Creation: Will It Replace Musicians or Become the Ultimate Assistant?

Summary (TL;DR)

AI music tools such as Suno and Udio are evolving at a breathtaking pace: they not only generate high-quality demos but are also beginning to offer fine-grained control. Musicians' anxiety in the face of this change is real. This article examines the current limits of AI's capabilities and argues that while AI can produce technically competent "fast food," it lacks the emotional depth, aesthetic judgment, and cultural understanding unique to humans. It suggests that future musicians define themselves as "creative decision-makers," using AI as a powerful assistant to improve efficiency and creativity through human-machine collaboration (e.g., AI drafts, human decisions), and thereby find irreplaceable value in the AI era.

Last week, I visited a friend's studio and saw Suno open on his computer. He typed in a description, and in less than 15 seconds, a complete demo with vocals, bass, and drums came out. He said this was a proposal for a client; previously, it would take 2-3 days, but now he can produce 5 stylistic options in half an hour.

Seeing this, I felt a mix of emotions. As someone who has worked in music production for a few years, I know what it means. The efficiency gain is good, but what really causes anxiety is the question swirling in my mind: if I'm just making commercial background music or "functional music" like demos, how much value do I still have?

This anxiety is not unique to me. Over the past six months, "AI music" topics have been setting musicians on edge almost daily in various communities. Some call it "music democratization," where everyone can make music; others call it the "end of art": does typing a few keywords count as creation?

I think, rather than arguing, it is more important to see clearly what AI can do, what it cannot do, and how we, real musicians, can find our place in this new era.

What Can AI Actually Do?

Over the past year, AI music tools have evolved far faster than many people expected.

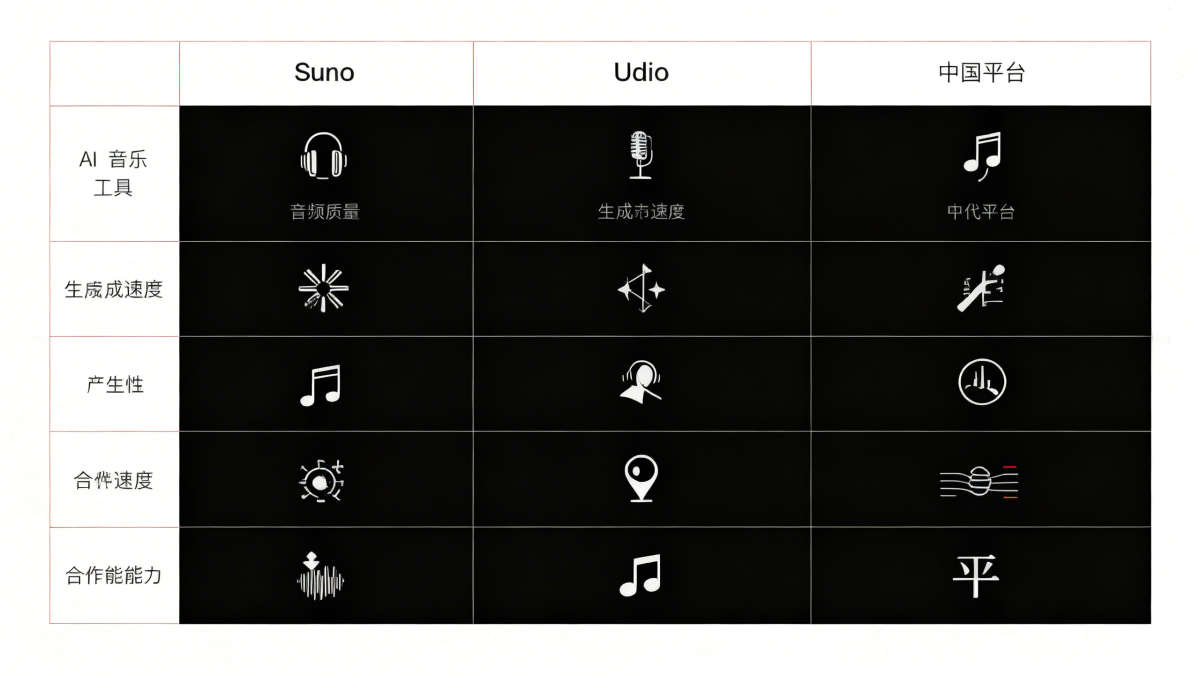

Leap in Generation Quality

Take Suno as an example: the v5 version released in September 2025 has largely eliminated the obvious "mechanical feel" of earlier versions. Vocals now have breath sounds and vibrato, and even adjust their emotional expression to the genre. Udio v4 has achieved 48 kHz/24-bit studio-level output with significantly improved instrument separation, leaving the earlier "mushy" sound behind.

Domestic platforms like NetEase Cloud and Tencent Music have also launched their own AI creation platforms. NetEase's XStudio stands out: it already has over a dozen highly realistic AI singer voice banks. Because it is connected with the copyright system, ordinary users can also quickly generate a complete song.

From "Magic Button" to "Creative Tool"

AI tools are no longer just "input description, wait for result." Suno now supports Mashups (blending styles of two songs), Personas (saving specific vocal characters for cross-track use), and can even generate a Drum Loop or a Piano Riff separately. Udio's Magic Edit allows you to precisely select a 5-second lyric line and regenerate just that section without affecting other parts.

These features mean that AI is transforming from a "one-click generator" into a "finely controllable production tool." You can export AI-generated vocals, bass, and drums as stems, then re-arrange and mix them in a DAW.

Practical Application Scenarios

In community discussions, professional musicians have summarized a "70% Rule": AI can complete 70% of the work in minutes, but the remaining 30% still requires human judgment and polishing.

A senior producer on Zhihu shared that he now uses AI to quickly generate multiple arrangement references, then selects the one with the best feel to re-record with real instruments or high-end sound sources. The initial conception stage, which originally took 2-3 days, is now shortened to half a day.

For content creators, AI solves the big problem of background music. The "Journey to the West Series" AI suites on Weibo frequently go viral, with ordinary netizens making a complete Chinese-style song in minutes. This was unimaginable before.

But What AI Cannot Do Is the Key

Having read this far, you might ask: if AI is already so powerful, is there really still a need for musicians?

My answer is: Yes. And more than ever before.

"Soul" Is Not Metaphysics, It's a Real Absence

In discussions on Reddit and Zhihu, a common verdict among professional musicians is that AI works are "cyber fast food." This is not a put-down but an accurate description.

AI-generated music can often "tug at the heartstrings of a group," but it lacks the "real emotional heartbreak of an individual creator." For example, if you ask AI to make a song about heartbreak, it can reproduce all the typical features of "heartbreak songs" very well: holding back tears, lonely late nights, flashback scenes. But it has never actually been heartbroken, so it lacks the unique, unreplicable details and breaths that belong only to you.

An independent musician said at an industry annual meeting: "AI can make works that are technically flawless, but the value of music lies not in being flawless, but in those 'accidents' and 'imperfections'." Think of Kurt Cobain's raspy voice or Jay Chou's mumbled singing style: these things that don't fit "beautiful" standards are precisely their signatures.

Aesthetic Judgment and Cultural Background

AI can imitate styles, but it doesn't know "why doing this is right."

A producer shared a case: he was making a New Chinese Style wedding soundtrack for a client. The AI-generated version had no technical faults, but it felt "a bit off." Later he realized that the AI had packed in too many Chinese-style elements, which made it sound cheap. Real New Chinese Style needs "white space": knowing when "not adding" is more sophisticated than "adding." This cultural understanding and aesthetic judgment is something AI cannot yet provide.

Complexity of the Real World

AI's training data comes from existing works, and its logic is "statistical regularity." But music creation often needs to break rules.

Take a very specific case: in scoring a suspense film, the director wants "dissonant violin sounds" to create unease in a certain scene, but the second half needs to shift to warm memories. This kind of subtle adjustment, based on the specific plot, the visual imagery, and even the actors' expressions, requires multiple rounds of communication between the producer and the director. AI cannot take part in this complex collaboration.

Copyright and Commercial Issues

There is also a practical issue: purely AI-generated works are currently not protected by copyright. According to the latest regulations in early 2026, only works involving "human-machine co-creation" with substantial human expression can be protected. This means that if you want to commercialize AI works, you still need musicians to be involved.

Best Partner: Practical Paths for Human-Machine Collaboration

So, what is the right way to use AI? Over the past six months of practice, several effective collaboration modes have taken shape.

Mode 1: AI Drafts, Human Decisions

This is currently the most common usage. Use AI to quickly generate 20-30 versions of different styles, then use your aesthetic experience to select, fuse, and modify. For example, if you like the intro of version A but prefer the chorus of version B, you can stitch them together and then polish the details.

A producer said: "Previously, for the initial conception of a song, I might have to try a dozen directions in my head. Now I let AI make all these dozen directions, and I decide after listening. It's like having a very fast assistant during the sketch stage."

Mode 2: AI Generates Materials, Human Assembles

Use AI to generate individual elements (drum loops, bass lines, vocal samples, chord fragments), then assemble them yourself like building blocks.

In a DAW, you can use AI-generated elements as a sample library. For example, if you need an 8-bar Trap-style drum beat, previously you might have had to buy a sample pack or program it yourself. Now, with Suno's Samples function, you can generate 20 different options in 30 seconds.

Mode 3: AI as Style Reference, Human Remakes

When you want to try an unfamiliar style, you can first use AI to generate a "reference tape" to hear the general arrangement idea, instrument configuration, and rhythm, then re-record with real instruments or high-quality sound sources.

An independent musician shared: "I didn't do Afrobeat before and wasn't sensitive to the drum patterns and bass lines of this style. I let Udio generate 10 Afrobeat style references, listened for a few days to get the feel, and then made it myself with real drums and bass. AI was like giving me a 'crash course'."

Mode 4: Human Sets Framework, AI Fills Details

You first build the main melody, chord progression, and section structure in the DAW, then use AI to fill in those parts that are time-consuming but do not determine the core expression—such as drum beats, percussion, and ambient sound effects.

This method is particularly suitable for commercial projects. You control the core creativity while AI handles the repetitive labor, which preserves the originality of the work and significantly improves efficiency.

The Future of Musicians: What Is Irreplaceable?

Writing this far, I want to return to the question at the beginning of the article: Will AI replace musicians, or become the ultimate assistant?

My answer is: It depends on how you define yourself.

If You Define Yourself as a "Technical Executor"

If your value is only "accurately executing client requirements," then you will indeed feel a lot of pressure, because AI is catching up rapidly in this regard. For projects like "give a reference, make a similar one" in particular, AI can already do very well.

This is why quotes for bottom-tier music producers have been "halved" since 2025. Yet this is not the end of the industry; it is the beginning of occupational stratification.

If You Define Yourself as a "Creative Decision-Maker"

Then your core competitiveness lies in: aesthetic judgment, emotional expression, cultural understanding, and grasp of specific scenes. These are things AI currently cannot do.

Future top musicians may not necessarily be the best technically, but they must be the ones who best understand "why do this." They will use AI as a tool, but final creative control will remain in their own hands.

Now Is the Window for Transformation

The industry consensus in 2026 is that AI will not eliminate the music industry, but it will completely reshuffle it. Now is the window: those who are willing to learn, experiment, and find the human-machine balance will gain a huge competitive advantage.

As for how to cope, my advice is threefold. Spend a month getting to know Suno, Udio, or the domestic platforms in depth and find the usage that suits your workflow. Deepen your understanding of a particular style, and improve your aesthetic judgment and communication skills. And clarify which parts of your work are "human expression": don't just use an AI-generated song as is; at the very least, put your decisions, your modifications, and your imprint into it.

Final Words

The essence of music has never been "making a song," but "expressing a person." AI can make technically perfect sounds, but it has no life experience and no emotional needs. This is why no matter how technology advances, those who really have something to say remain irreplaceable.

In the AI era, musicians will not be eliminated. But those who are unwilling to think about "where my irreplaceability lies" might be.
